There’s a website that’s been in the news a lot recently: This Person Does Not Exist. It generates a new human face on every refresh using a Generative Adversarial Network (GAN), or more precisely StyleGAN, created by Tero Karras, Samuli Laine and Timo Aila. The faces are very realistic; unless you were primed, you wouldn’t know that you weren’t looking at a photograph of a real human.

The above face was generated by the website. The face isn’t perfectly symmetrical, and the features are all in proportion. The only things that look a bit off are the skin texture and the slight halo around the head. The lack of a background, although not suspicious in itself, can be an indicator that an image was generated by an AI.

Realistic backgrounds add another layer of complexity (unless you just want to overlay the head on an existing photo), and so they often seem to be left out of GAN-created images. This is probably because there’s a lot less consistency in backgrounds than there is in faces, and so combining multiple images leads to a colorful blur. Another indicator I’ve noticed is shadow: GANs don’t really seem to draw shadows cast across a face, whereas real photos may contain them.

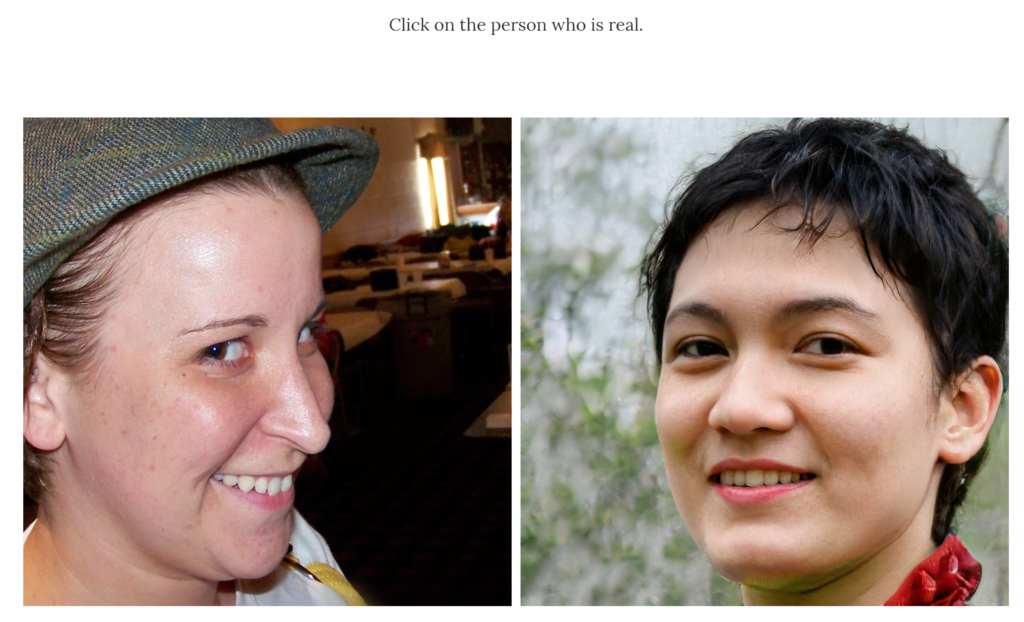

To demonstrate how realistic these images are, Jevin West and Carl Bergstrom from the University of Washington have created another website, Which Face Is Real. It shows one GAN face and one real one, and you have to guess which is real.

The above example isn’t too difficult; the image on the left has a complex background, and the one on the right does not. The hair is also a bit weird in the face on the right. But, at a glance, both look like real humans.

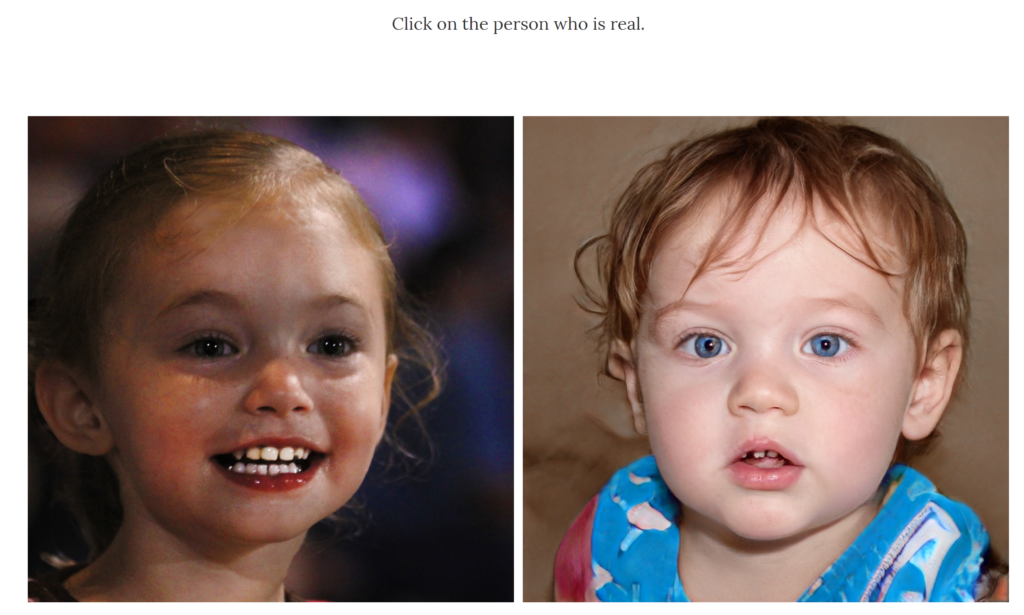

Interestingly, the StyleGAN implementation being used by the above website can generate images of kids.

Children tend to be under-represented in training data, and so it’s interesting that this system has enough of a resource bank to be able to generate them. Incidentally, the image on the right is the fake one; the textures are generally a bit off, especially around the scarf and hair.

So, these pictures are pretty convincing to the untrained human eye. How do they fare against facial detection systems?

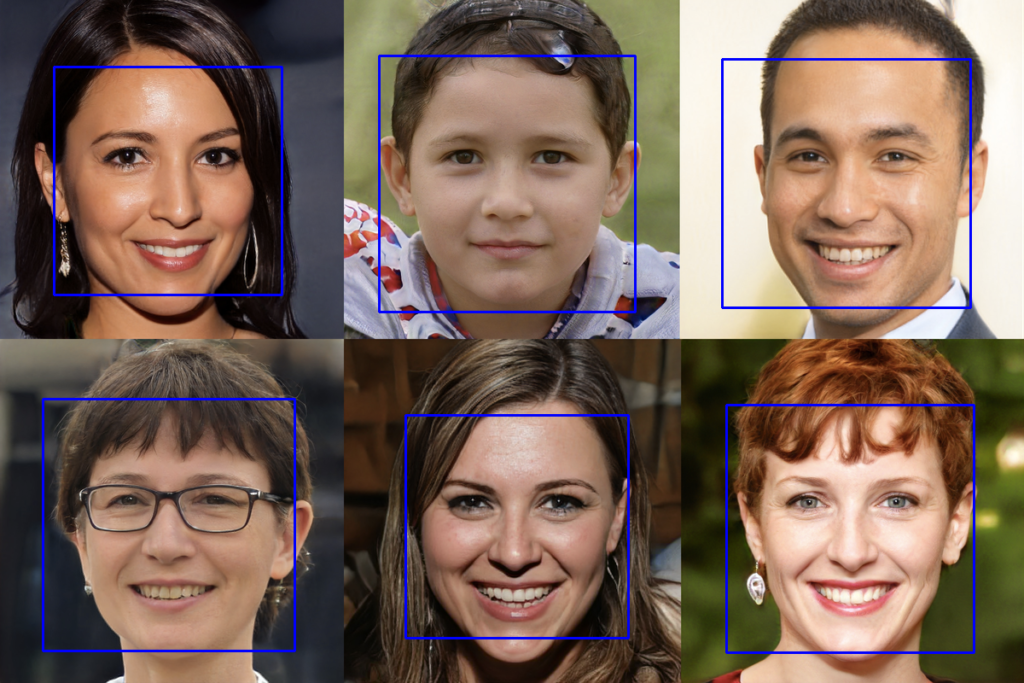

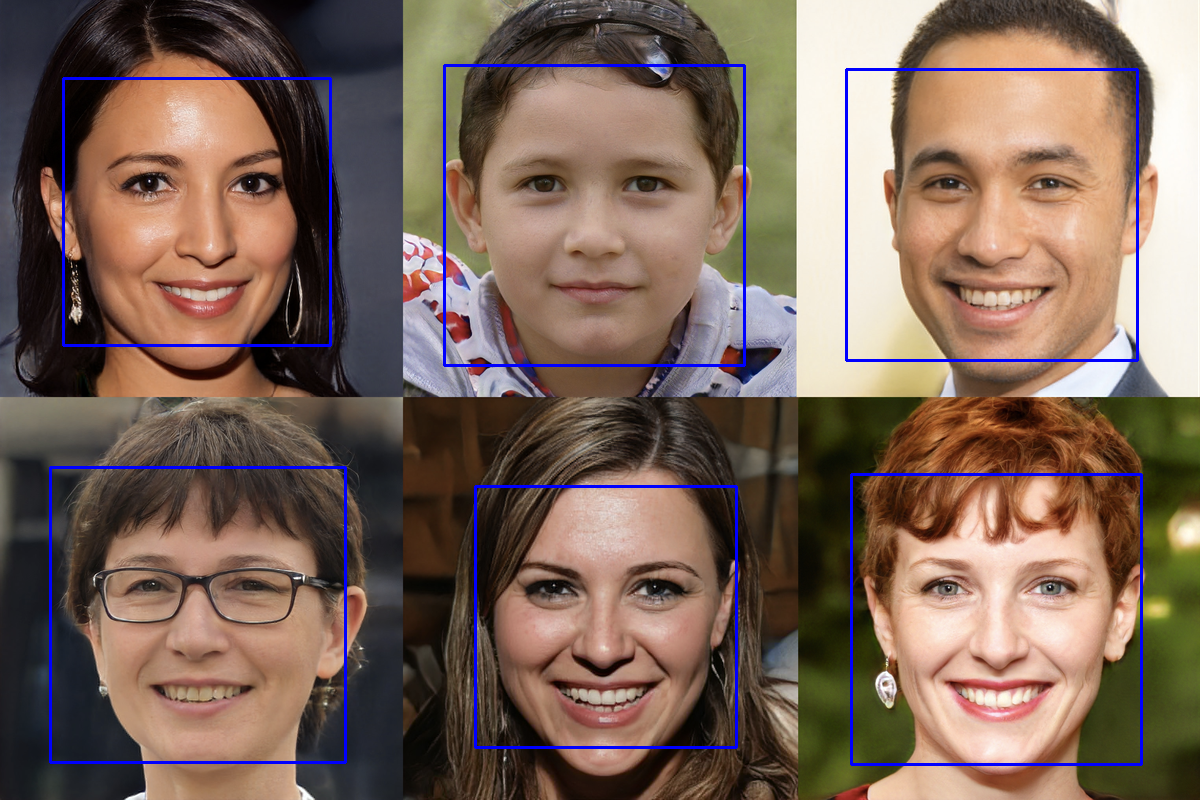

Generated image compilation from: https://www.theverge.com/tldr/2019/2/15/18226005/ai-generated-fake-people-portraits-thispersondoesnotexist-stylegan

OpenCV had no issue detecting faces in these StyleGAN images. Why would it? They’re not adversarially designed to fool classifiers. Still, this does make the images quite useful: they could be used to generate training image sets for other facial detection systems. This could help us to create more representative training sets, and counter some of the legal issues surrounding data storage (and implementation-specific permissions to use images).
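For anyone wanting to try a similar check, here is a minimal sketch of how it could be done in Python. It is not necessarily the setup used for the images above: it assumes the `opencv-python` package and its bundled frontal-face Haar cascade, and the function names (`count_faces`, `detection_rate`, `folder_detection_rate`) are made up for illustration.

```python
from pathlib import Path


def count_faces(image_path):
    """Return the number of faces OpenCV's stock Haar cascade finds in one image."""
    # Imported here so the rest of the sketch loads without OpenCV installed.
    import cv2

    # Assumption: the frontal-face cascade bundled with the opencv-python
    # package; the post does not say which detector was actually used.
    cascade_path = cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
    detector = cv2.CascadeClassifier(cascade_path)
    if detector.empty():
        raise RuntimeError(f"could not load cascade from {cascade_path}")

    image = cv2.imread(str(image_path))
    if image is None:
        raise FileNotFoundError(f"could not read image: {image_path}")

    # Haar cascades operate on greyscale images.
    grey = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    # Each detection is an (x, y, width, height) box. The parameters are
    # common starting values, not tuned ones.
    boxes = detector.detectMultiScale(grey, scaleFactor=1.1, minNeighbors=5)
    return len(boxes)


def detection_rate(face_counts):
    """Fraction of images in which at least one face was detected."""
    counts = list(face_counts)
    if not counts:
        return 0.0
    return sum(1 for n in counts if n > 0) / len(counts)


def folder_detection_rate(folder, pattern="*.png"):
    """Run the detector over every matching image in a folder."""
    paths = sorted(Path(folder).glob(pattern))
    return detection_rate(count_faces(p) for p in paths)
```

Calling `folder_detection_rate("generated_faces")` on a (hypothetical) folder of saved StyleGAN images would give the fraction in which at least one face was found. A Haar cascade is a fairly old detector, so this says little about how more modern systems would behave.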

There are, however, risks stemming from more nefarious uses. It would be much easier to catfish someone (pretend to be someone else on the internet) with an image faked by this system, as a reverse image search won’t bring up any results. More generally, these images are perfect for creating fake profiles. Websites already exist which can generate fake bios for fake accounts, and generating email addresses isn’t hard. With the addition of easily accessible fake profile pictures, a completely automated profile generation system could be implemented which would be very hard to detect. As with many AI things, this will likely lead to an arms race with social media websites which want to tackle the issue of bots.

Given how rapidly image and video faking technologies are being developed, the issue is only likely to get worse; any discriminator which can detect the fakes can be fed back into the GANs, leading to even harder-to-detect fakes being created. Where we’ll end up I cannot say, but I’m certainly interested to see how the future turns out!
